Self-Hosted LLM vs API: The Real Cost and Security Trade-offs for Enterprise in 2026

There is a conversation happening in every CTO's office right now. Someone from engineering has done the math: GPU rental is $2/hour, API tokens cost $15 per million outputs, therefore self-hosting will save the company money. They might be right. They are more likely wrong. And the difference between those two outcomes is several hundred thousand dollars and months of engineering time.

This is not a philosophical debate about open-source versus proprietary AI. It is a math problem, and most teams are solving it with incomplete data.

The Short Answer Nobody Wants to Hear

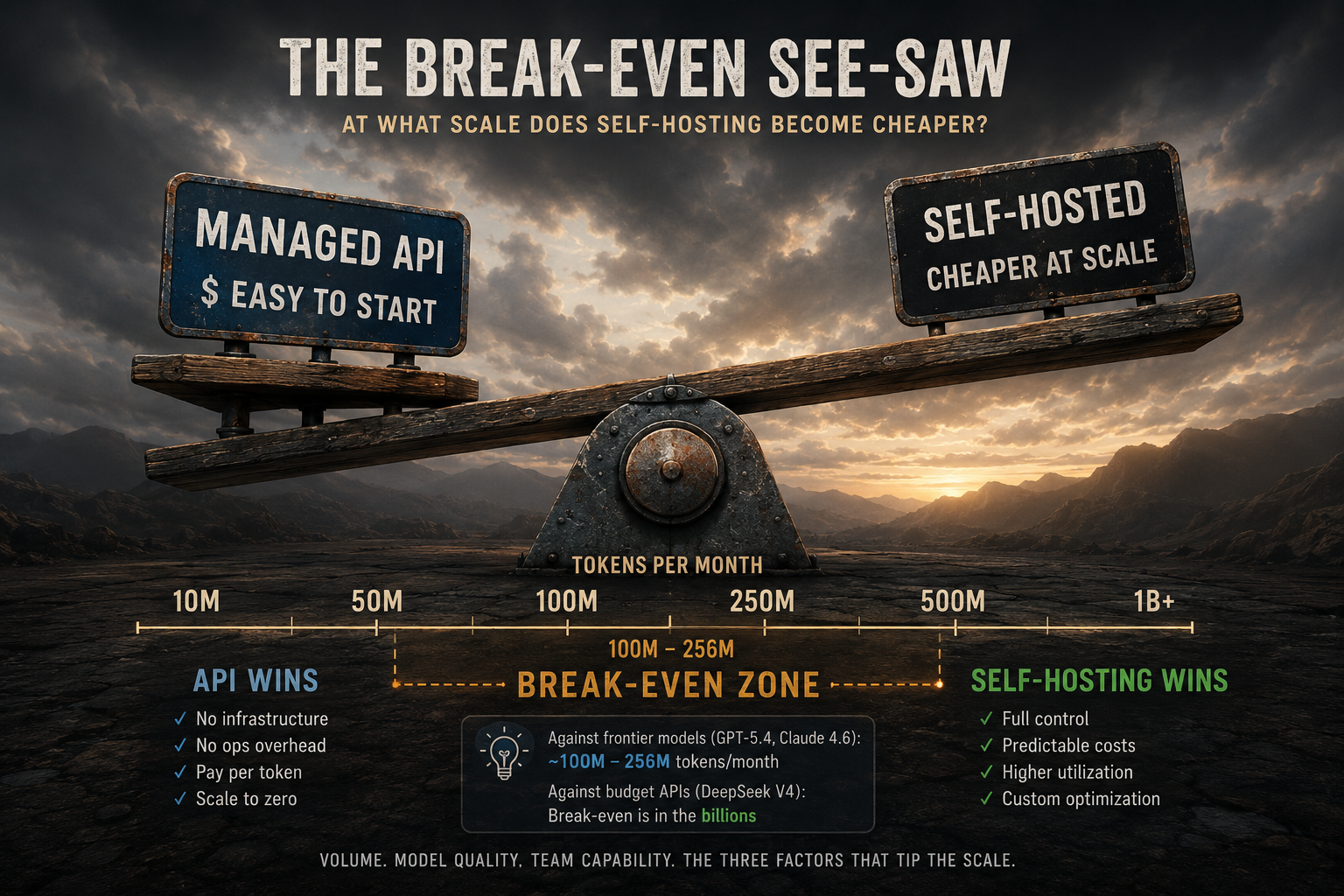

For the vast majority of enterprise teams in 2026, managed APIs are cheaper than self-hosting once you account for the full cost stack. The break-even point against frontier models (GPT-5.4, Claude Sonnet 4.6) sits at roughly 100 to 256 million tokens per month, a threshold most production systems never reach. Against budget-tier APIs like DeepSeek V4 at $0.14 per million tokens, the math barely ever flips.

The exception is real and important: regulated industries. Healthcare, finance, and legal teams often have no choice. HIPAA mandates, GDPR data residency requirements, and attorney-client privilege concerns can make self-hosting (or a private cloud endpoint) the only compliant option, regardless of cost.

Everything else comes down to volume, team capability, and how honest you are about the hidden costs.

What the Market Looks Like in 2026

Enterprise LLM API spend hit $8.4 billion in 2025, more than doubling within six months. Inference costs now dominate AI budgets, having overtaken training spend as the primary line item. Nearly 78% of large organizations have deployed or are actively evaluating generative AI systems.

Three providers control 88% of enterprise API spend: Anthropic (~40%), OpenAI (~27%), and Google (~21%). Open-weight models hold just 11% of enterprise market share, down from 19% in 2024, despite their rapid quality improvement. The quality gap between open-weight and frontier closed models has narrowed to roughly six months of capability difference, and that gap continues to close.

The pricing landscape now spans a 600x range, from $0.10 per million tokens (GPT-4.1 Nano) to $60 per million tokens for frontier reasoning models like o3. Model selection alone can swing monthly costs by 10x or more.

GPT-5.4: $2.50 input / $15.00 output per 1M tokens Claude Sonnet 4.6: $3.00 input / $15.00 output per 1M tokens Gemini 2.5 Pro: $1.25 input / $10.00 output per 1M tokens GPT-5-mini: $0.125 input / $1.00 output per 1M tokens Gemini 2.5 Flash: $0.15 input / $0.60 output per 1M tokens DeepSeek V4: $0.14 input / $0.28 output per 1M tokens GPT-4.1 Nano: $0.10 input / $0.40 output per 1M tokens

Prices as of May 2026. Verify at provider pricing pages before budgeting.

The Three Deployment Modes

Before diving into the cost analysis, it is worth being precise about what we are actually comparing. There are three distinct paths, not two.

Managed API means calling OpenAI, Anthropic, Google, or open-model hosts like Together AI and Groq over HTTPS and paying per token. Zero setup cost. Variable pricing that scales linearly with usage. Instant access to the latest models. No infrastructure to maintain.

Managed Cloud AI (Azure OpenAI, AWS Bedrock, GCP Vertex AI) is the middle path. You get the same frontier models served from within your cloud boundary, with VNet integration, private endpoints, IAM controls, and enterprise SLAs. The trade-off: a 15 to 40% pricing markup over direct API access, plus $200 to $2,000 per month in cloud infrastructure overhead. For most regulated enterprises with an existing Azure or AWS estate, this is the right starting point.

Self-hosted means running open-weight models (Llama 4, DeepSeek V4, Mistral) on your own GPU infrastructure using inference runtimes like vLLM or TGI. You control everything: the model version, the data, the latency, the audit trail. You also own every infrastructure failure, security patch, and model update.

Our AI and cloud integration practice works across all three modes, helping clients select and architect the right approach for their workload and compliance profile.

Where the Break-Even Actually Is

The most misunderstood question in enterprise AI infrastructure: at what scale does self-hosting become cheaper?

A single A100 GPU at roughly $2/hour on cloud rental, running Llama 4 70B with vLLM at approximately 1,500 tokens per second average throughput, costs approximately $1,500/month in raw GPU time. Against GPT-5.4 at a blended $5.63 per million tokens (50/50 input/output split), break-even is around 256 million tokens per month, about 8.5 million tokens per day.

That sounds achievable. But the comparison is misleading, because Llama 4 70B and GPT-5.4 are not the same model. If your workload genuinely requires GPT-5.4-quality output, self-hosting is not yet an option: the open-weight equivalents are not there.

Against budget-tier APIs, the math becomes even less favorable for self-hosting. When DeepSeek V4 charges $0.14 per million input tokens, the break-even for self-hosting a comparable open model is measured in billions of tokens per month. At those volumes, you are not a startup; you are running infrastructure at the scale of a major tech platform.

Below 50M tokens/month: API wins in almost every scenario. 50M to 500M tokens/month vs frontier APIs: A genuine decision point, but only with dedicated MLOps capacity. Above 500M tokens/day vs comparable open models: Self-hosting delivers up to 5x cost savings. Any volume with budget APIs (DeepSeek, Gemini Flash): API wins; self-hosting is rarely justified.

The Hidden Costs GPU Ads Don't Mention

Here is the number that changes most cost models: self-hosting costs 3 to 5x more than the raw GPU rental price once you factor in everything. The line items most teams forget:

Engineering labor. A self-hosted LLM deployment requires 10 to 20 hours per month at minimum for maintenance, monitoring, and troubleshooting. At $75 to $150 per hour for a senior DevOps or ML engineer, that is $750 to $3,000 per month in labor before any incident response. A full-time MLOps engineer runs $145,000 per year in the US. Most teams underestimate this by 60%.

Model update cycles. New model versions drop every 6 to 8 weeks. Each update requires re-testing, re-validating, and re-deploying. With managed APIs, this is invisible. With self-hosting, it is roughly $12,000 per year in engineering time, assuming no complications.

GPU utilization waste. A GPU running at 10% utilization inflates your effective cost-per-token by 10x. Enterprise workloads are typically bursty: high demand during business hours, near-zero overnight. APIs scale to zero when idle. Your GPU cluster bills you regardless.

Power and cooling overhead. Data centers report actual power consumption at 1.5 to 2x the rated GPU TDP when accounting for cooling and power supply inefficiencies. On-premise hardware adds facilities management, physical security, and real estate costs on top of that.

Hardware depreciation. Over 36 months of straight-line depreciation, an enterprise H200 GPU at $30,000 loses $833 per month per unit. As open-weight model quality improves and new GPU generations arrive, hardware refresh cycles compress the effective depreciation window further.

Azure OpenAI infrastructure overhead. VNet integration, private endpoints, and managed identity setup add $200 to $2,000 per month in Azure infrastructure costs, a 15 to 40% markup over direct pricing. The average Azure OpenAI customer pays 22% more than equivalent direct API access. That markup buys real value: compliance, enterprise SLA, Microsoft support. But it needs to appear in the cost model.

Teams that start their cost analysis from raw GPU prices and API token rates are systematically underestimating self-hosting by a factor of 3 to 5x. Accurate total-cost-of-ownership modelling is the first thing our AI integration practice builds before any infrastructure recommendation.

Security: Neither Option Is Inherently Safer

The security question is not "self-hosted is private, API is risky." Both deployment models carry distinct threat surfaces. What matters is where your security competency lives and where your data boundary must be.

What managed APIs expose you to. Your prompts and completions traverse third-party infrastructure. Under enterprise agreements, major providers commit not to train on your data, but the data still moves through their systems. This matters for attorney-client privileged communications, protected health information, and proprietary intellectual property. Logging practices vary significantly between tiers: standard developer and free-tier agreements often permit training use, while enterprise agreements change this but require active negotiation and verification.

Shadow AI is now the most common entry point for enterprise data leakage. IBM's 2025 Cost of a Data Breach Report notes that shadow IT, including unsanctioned AI tools, has become a leading factor in enterprise incidents. As of 2026, 60% of organizations have no formal AI security policy addressing departments that independently connect sensitive business data to public LLM APIs without IT oversight. Single-provider dependency also creates business continuity risk: the January 2025 ChatGPT outage disrupted production systems for thousands of enterprises simultaneously.

What self-hosting exposes you to. Supply chain attacks are a real and underappreciated risk. In 2025, malicious model weights were identified on Hugging Face containing reverse shells hidden in the deserialization process. PyTorch's pickle deserialization is a well-documented attack vector: a malicious model can execute arbitrary code on the machine that loads it. Open-weight models also typically lack the content filtering, abuse detection, and output safety layers built into commercial APIs. Your organization becomes fully responsible for ensuring the model does not produce non-compliant output. There is no vendor safety team to escalate to.

Compliance: When the Decision Is Made for You

For regulated industries, the self-hosting vs. API question is often answered by law, not by spreadsheet.

HIPAA requires that Protected Health Information cannot leave HIPAA-covered infrastructure without a signed Business Associate Agreement. Most standard public LLM API offerings are not BAA-covered by default. OpenAI, Anthropic, and Microsoft (via Azure) do offer enterprise BAAs, but they require separate contracting and legal review. Self-hosting on your own infrastructure bypasses this entirely: PHI never leaves your environment.

GDPR requires organizations to know where personal data goes and who processes it. Using a public API makes your LLM provider a data processor under GDPR, requiring a Data Processing Agreement and compliance with data residency requirements. When Meta declined to release a model in the EU due to regulatory uncertainty in 2025, organizations with single-provider dependencies had no compliant fallback.

The EU AI Act reaches full enforcement in August 2026. High-risk AI systems must meet requirements for transparency, human oversight, audit trails, and explainability for automated decisions. Both API and self-hosted deployments must comply, but self-hosted infrastructure gives more control over the logging and audit layers required for regulated use cases.

SOC 2 and financial services frameworks require AI infrastructure to be included in compliance scope if it processes covered data. Customer PII and proprietary trading data routed through commercial APIs create documentation and control gaps that audit firms are increasingly flagging.

The practical test: if your data would require a BAA, a DPA, or a data residency guarantee, you need either Azure OpenAI with private endpoints, AWS Bedrock, GCP Vertex AI, or full self-hosting. Standard public API access is not compliant for these workloads. Marka has built compliant AI architectures across healthcare, finance, and public sector clients since 1993.

Vendor Lock-in: The Risk That Compounds Quietly

API-first architectures create lock-in that only becomes painful during migrations. The most dangerous form is not API syntax lock-in; it is behavioral lock-in, where your prompts, application logic, and team assumptions are tightly coupled to one model's specific output behavior.

67% of organizations state they want to avoid high dependency on a single AI provider. 45% say vendor lock-in has already hindered their ability to adopt better tools. 57% of IT leaders spent more than $1 million on platform migrations in the last year, and that was before AI infrastructure became mission-critical for most enterprises.

Lock-in operates at multiple layers simultaneously. At the model layer, API providers silently update model behavior: an output that worked perfectly last month may produce subtly different results today. At the infrastructure layer, Azure, AWS, and GCP each use different authentication systems, SDKs, and feature sets. Migrating between cloud providers is measured in weeks of engineering, not hours.

The mitigation is architectural: build model-agnostic systems from the start. Use abstraction layers like LiteLLM or Portkey that normalize provider APIs. Build on MCP (now a Linux Foundation standard) for agent-tool integration rather than proprietary orchestration. Design your application so the LLM can be swapped without rewriting the surrounding system.

The winning enterprise pattern in 2026 is multi-provider routing: send 70% of traffic to a cost-efficient model like DeepSeek V4, 25% to Claude Sonnet 4.6, and reserve frontier-tier reasoning for the 5% of requests that genuinely require it. Same overall output quality, roughly 15% of the cost of routing everything to frontier models.

How to Choose: A Practical Decision Framework

Run through these questions in order and stop when you have an answer.

1. Does your data contain PHI, PII, attorney-client, or classified information that cannot leave your infrastructure? If yes: you need Azure OpenAI private endpoint, AWS Bedrock, GCP Vertex AI, or full self-hosting. This is not negotiable.

2. Do you actually know your token volumes? If no: start with API. Instrument everything. Measure for 60 to 90 days before making any infrastructure commitment.

3. Are you processing more than 100 million tokens per month against frontier-tier models? If yes: run a full TCO model including DevOps labor, not just GPU rental. The math may still favor API depending on your team's capacity.

4. Do you have a dedicated MLOps engineer or team available right now? If no: do not self-host. The operational burden without experienced engineers will cost more in wasted GPU hours and debugging time than a year of API costs.

5. Does your workload require capabilities only available in closed frontier models, such as long context windows, multimodal reasoning, or very low hallucination rates? If yes: use Azure OpenAI or direct frontier API. Open-weight models cannot reliably serve these requirements yet.

The Architecture That Actually Works in 2026

For most enterprise teams, the answer is not a binary choice between "call OpenAI" and "run everything on your own GPUs." The practical architecture combines both.

Start with managed API. Use Azure OpenAI or direct provider API for exploratory work, early-stage products, and any workload where token volumes are unknown. The cost is justified by the speed of iteration and the absence of infrastructure risk.

Add a private cloud layer for compliance-sensitive workloads. Azure OpenAI with VNet integration and private endpoints gives you frontier model access within your security boundary. The 15 to 40% price premium is almost always worth it compared to the compliance and operational overhead of full self-hosting.

Introduce self-hosted open models for high-volume, well-defined tasks. Once a workload is stable, well-understood, and processing consistently high token volumes, running Llama 4 or Mistral on your own GPU infrastructure becomes cost-effective. Start with cloud GPUs, not on-premise hardware; preserve flexibility until volumes prove the case.

Route intelligently across all three. Use an LLM gateway (LiteLLM, Portkey, or equivalent) as the abstraction layer. Route routine structured tasks to cheap open-model APIs, standard production tasks to mid-tier frontier APIs, and complex multi-step reasoning to premium frontier models. Log everything: token counts, costs, latency, and output quality per route.

According to CloudZero's cost intelligence research, only 43% of organizations track AI spend by customer and just 22% track it by transaction. Without that granularity, rational infrastructure decisions are impossible. Measure AI spend per transaction, per customer, and per workflow, not just per month.

What This Means for .NET and Azure Teams

For organizations already running Microsoft's infrastructure stack, the decision framework simplifies considerably.

Azure OpenAI Service is not just an API wrapper; it is a compliance architecture. VNet integration, private endpoints, Entra ID identity management, and built-in SOC 2, ISO, and HIPAA compliance frameworks mean most of the regulated-industry requirements are satisfied without building custom infrastructure. A .NET-based system connecting to Azure OpenAI via private endpoint, with prompts and completions never leaving the Azure boundary, is a defensible architecture for most enterprise compliance reviews.

The cost trade-off is real: Azure OpenAI runs 15 to 40% higher than direct API access. But the alternative, building and maintaining a self-hosted inference stack, handling model updates, patching inference libraries, and staffing the operational overhead, is a significant ongoing commitment that requires MLOps capability most enterprise software teams do not have in-house.

Being a Microsoft Gold Certified Partner, Marka's practical recommendation for most .NET and Azure clients is to start with Azure OpenAI, instrument token volumes rigorously, and evaluate Azure AI Foundry with open-weight models only when sustained volumes above 100 million tokens per month are confirmed on specific workloads. You can review the cloud and AI services we provide or explore client projects where we have applied this pattern in production.

Full self-hosting is the right answer for a small number of organizations: those with strict air-gap requirements (defense, some government contracts), those with consistently extreme token volumes, and those with strong MLOps teams who can treat the infrastructure as a strategic long-term investment rather than a cost optimization shortcut.

The Takeaway

The math of self-hosted LLM vs. API in 2026 comes down to three variables: your data compliance requirements, your actual token volumes (not estimated), and your engineering team's operational capacity.

For most enterprise teams, managed API with a private cloud layer for sensitive workloads is the right architecture. Not because self-hosting is inherently bad, but because the true total cost, GPU rental plus engineering labor, model updates, utilization waste, depreciation, and infrastructure overhead, is 3 to 5x higher than the number that appears in the spreadsheet that started the conversation.

The teams that get this wrong spend six months and $200,000 building GPU infrastructure, only to find their actual production volumes do not justify it. The teams that get it right start with APIs, measure everything, and make the infrastructure investment only when the data proves it.